Eclipse PanEval provides a unified, vendor-neutral framework to evaluate AI models for capability, safety, and cybersecurity in line with EU regulations.

In-scope:

- A three-dimensional evaluation framework based on "Capacity – Task – Metrics"

- Coverage of major model categories, including language, multimodal, and speech models

- Support for a range of evaluation tasks, including task solving, coding, multi-turn QA, factuality, image-text QA, depth estimation, speech perception, and more

- Safety & robustness evaluation as a cross-cutting dimension across all model types

- Alignment with the compliance requirements of the EU AI Act and the Cyber Resilience Act (CRA)

- AI-assisted subjective evaluation to improve efficiency and objectivity

- Open leaderboard and evaluation platform (https://flageval.baai.ac.cn)

Out-of-scope:

- Model training or fine-tuning

- Deployment infrastructure for production AI systems

- Legal compliance certification (Eclipse PanEval provides evaluation tooling, not legal advice)


The Eclipse Management Organization (EMO) oversees the lifecycle of Eclipse projects and their trademark and IP management, and provides a governance framework and recommendations on open source best practices.

See the project’s PMI page at https://projects.eclipse.org/projects/technology.paneval

This project has a total of 2 repositories in its associated GitHub organization, eclipse-paneval.

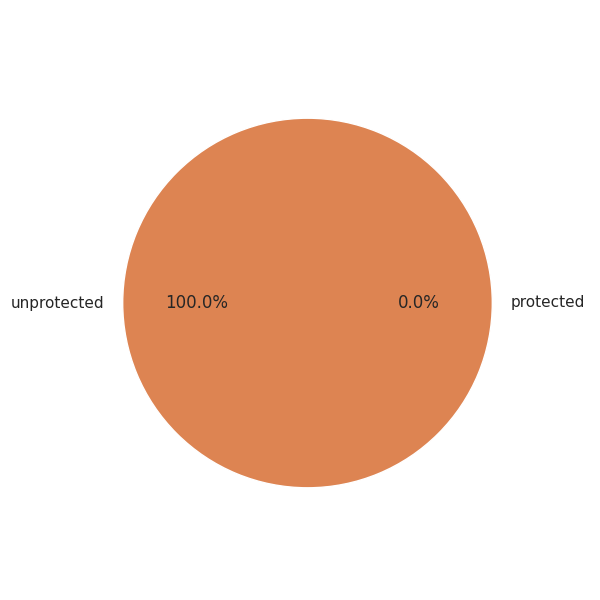

The graph below shows the percentage of repositories that have defined either a Branch Protection Rule or a Repository Ruleset: